Task Analysis Methods

Task analysis is a method of breaking a piece of work down into smaller elements and examining the relationships between the elements (Gillan, 2012). Task analyses organize information about the work performed so a systematic comparison can be made between the capabilities of a work system and the demands of the work (Drury, 1983). Task analyses are a critical tool for designers in ensuring that the system under design mitigates the cognitive and physical demands on users while they pursue their goals. Task analyses should be conducted early in the design cycle (Gillan, 2012) so that the system is designed or redesigned from the beginning to meet the demands of the work.

What is Task Analysis?

Task analysis is a method of breaking a piece of work down into smaller elements and examining the relationships between the elements (Gillan, 2012). For instance, a task can be decomposed into the sequential steps workers take to complete the task, the cognitive processes in which they engage, the tools they employ, and/or situational or contextual forces under which the work is performed. The result can be used to perform a systematic comparison between the capabilities of a work system and the demands of the work or to perform a comparison between experts and novices. This allows designers and analysts to gain insight into where the design of a new system or redesign of an existing system can improve task performance (Drury, 1983).

Authors' note: Some literature refers to a method called Cognitive Task Analysis (CTA), which is simply a task analysis that targets cognitive information. However, because of the complex nature of cognition, the CTA literature often describes pre-packaged combinations of data collection, task description, and analysis techniques known to work for analyzing cognitive work.

Why Use Task Analysis?

The goal of task analysis is to allow a systematic comparison between the capabilities of a work system and the demands of the work such that design requirements are discovered (Drury, 1983). Task analyses are a critical tool for designers because a systematic understanding of the system users, their goals, the steps they will attempt to complete using the system, and situational and contextual elements of the work strengthens a designer’s ability to ensure that the system they are designing or redesigning will effectively allow the users to achieve their goals. Failing to conduct task analyses prior to design may result in the creation of systems that are deficient in meeting the demands of the tasks the user wants to perform.

A task analysis can provide analysts and designers with a wealth of information to guide their efforts. For example, this information can take the form of a list of necessary design requirements, flowcharts of the goals and subgoals users strive for in completing a task, information about the probability and severity of errors occurring at certain stages of task completion, a list of learning objectives for training programs, a cognitive demands table, appropriate levels of automation in a system, and/or a list of criteria for performance evaluations. Task analysis outputs have been used to develop training and decision aids, preserve corporate knowledge, and identify workstation and interface features that facilitate decision making (Hoffman et al., 1998).

When to Use Task Analysis

Task analyses should be conducted early in the design cycle (Gillan, 2012) to ensure the system meets work demands during future design stages. This can prevent costly redesign efforts at later stages of the design cycle. If a prototype or earlier version of the targeted system does not yet exist, instruction manuals or similar systems can be analyzed to the same effect. A task analysis can even be performed on an imaginary system.

Task analyses can be performed on nearly any development schedule because the level of specificity is customizable. A quick, cursory analysis might describe only the high-level steps of a process (e.g., adding intro, methods, and results sections to write a paper) and target only one or two pieces of information (e.g., error type and severity), whereas a lengthy, deep analysis might break steps into further sub-components (e.g., individual key presses) and record more information at each step. In general, a deep analysis takes more time (unless the process can be efficiently automated by a computer), but the extra time can yield additional detail that reveals new design recommendations. The researcher should determine the level of specificity based on the results of a cost-benefit analysis.

How to Use Task Analysis

There are three phases to every task analysis: data collection, task description, and task analysis.

Data Collection

Every task analysis begins with data collection about the task of interest and the work system in which the task is performed. Data is often gathered through observation of users performing the tasks, interviews with workers, and reviews of archival data sources like job descriptions, instruction manuals, and/or past performance data (Gillan, 2012). Depending on the goal of the analysis, certain data collection techniques may be more appropriate than others. For example, the Critical Decision Method interview process (Hoffman et al., 1998) can be very helpful when performing a task analysis targeting cognitive elements, such as decision making, problem solving, or judgements. Additional details on how to collect data using observations and interviews can be found on their respective pages within this resource.

Task Description

Data collected in the previous step is often very rich with content, and distilling all of the gathered information down to workable conclusions can be a daunting and challenging endeavor.

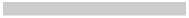

The first step is to create a task lexicon, a description of all of the major terms that the analyst will use in describing the task. Creating clear definitions of these terms allows the analyst to use the same terminology systematically and consistently throughout the rest of the task analysis, and allows other analysts to recreate the task analysis in the future knowing they are using the same terms. Below is an example task lexicon from a task analysis of operating a vending machine (Gillan, 2012):

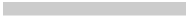

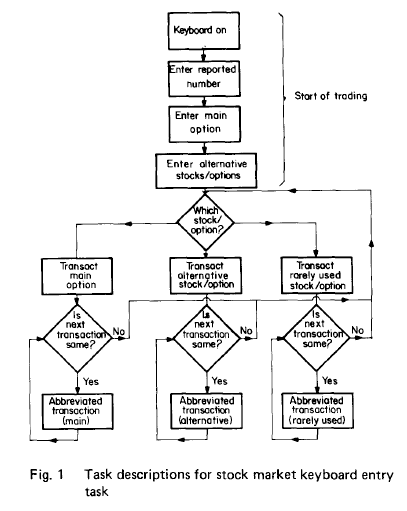

Once a task lexicon has been developed, the next step is to develop a task description. As the name implies, a task description describes the task from start to finish, explicating all of the steps involved in completing the task down to the appropriate level of specificity. The task description can take a narrative form or be displayed as a flowchart. Examples of each are provided below (Drury, 1983; Gillan, 2012):

As alluded to above, it is important that task lexicons and descriptions explicate the steps involved in a task to the appropriate level of specificity. The level of specificity depends on the purpose of the task analysis (Gillan, 2012). For instance, a designer of surgical tools may need to document down to every hand gesture a surgeon makes, but this level of specificity would be unwieldy and inefficient for the development of a software training program.

One technique for checking whether the level of specificity is appropriate is Duncan’s (1974) Progressive Redescription method. In this method, the analyst continues to break tasks down into subtasks until a user can complete the task simply by following the task description from start to finish. The analyst observes where errors are likely to occur and how detrimental they are to completing the task, and then builds in additional task steps until the probability of errors falls below a critical level and no highly damaging errors occur (Drury, 1983).

Note that the importance of considering the user as well as the task is especially clear at this stage. For instance, if a computer system were being designed for a group with a high degree of experience, a task step like “Log into computer terminal” may be sufficient because errors are unlikely to occur. However, if the system were being designed for users with no experience, it may be necessary to break “Log into computer terminal” down to “Search for Power Button > Press Power Button > Search for User Name field > Click User Name field > Type Name > Search for ‘Log In’ button > Click ‘Log In’ button” in order to reduce the probability of errors to an acceptable level.
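The stopping rule described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Step` class, the `steps_to_redescribe` function, the error probabilities, and the critical level of 0.05 are all hypothetical and do not come from Duncan (1974) or Drury (1983). The sketch flags any lowest-level step whose estimated error probability exceeds the critical level or whose errors would be highly damaging.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    name: str
    error_probability: float        # hypothetical estimate for the target users
    highly_damaging: bool = False   # would an error here be highly damaging?
    substeps: list["Step"] = field(default_factory=list)


def steps_to_redescribe(step: Step, critical_level: float) -> list[str]:
    """Return the lowest-level steps that still need to be broken down."""
    if step.substeps:
        flagged: list[str] = []
        for substep in step.substeps:
            flagged.extend(steps_to_redescribe(substep, critical_level))
        return flagged
    too_risky = step.error_probability > critical_level or step.highly_damaging
    return [step.name] if too_risky else []


# Hypothetical numbers for users with no experience.
login = Step("Log into computer terminal", error_probability=0.30)
before = steps_to_redescribe(login, critical_level=0.05)

# Redescribe the step using the breakdown from the text.
login.substeps = [
    Step("Search for Power Button", 0.02),
    Step("Press Power Button", 0.01),
    Step("Search for User Name field", 0.03),
    Step("Click User Name field", 0.01),
    Step("Type Name", 0.04),
    Step("Search for 'Log In' button", 0.02),
    Step("Click 'Log In' button", 0.01),
]
after = steps_to_redescribe(login, critical_level=0.05)

print(before)  # ['Log into computer terminal']
print(after)   # []
```

For an experienced user group, the analyst would simply assign the single step a low error probability, and the function would return an empty list without any further breakdown.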

Task Analysis

Once a task description has been completed, the next step is to compare the demands of the task with the capabilities of the system (Drury, 1983). At this stage, the analyst can start identifying mismatches between task demands and system capabilities and generate design solutions that increase the system’s capabilities enough to achieve adequate task performance. Below is an example of a task analysis for a port of entry operator (Drury, 1983):

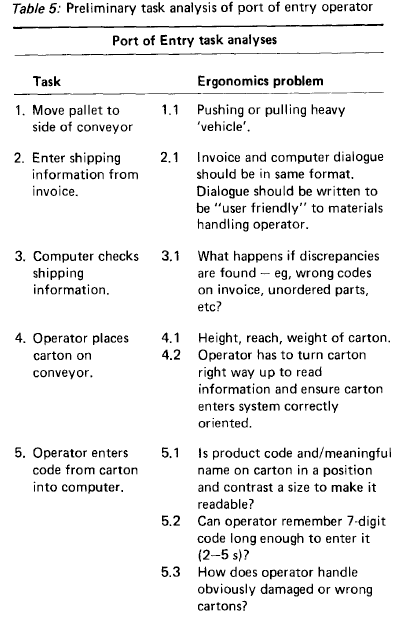

There are many different methods for making this comparison. Several methods that analysts have used in the past are described below. Analysts can draw on these methods to conduct their task analyses in the way that best organizes the information for their design effort.

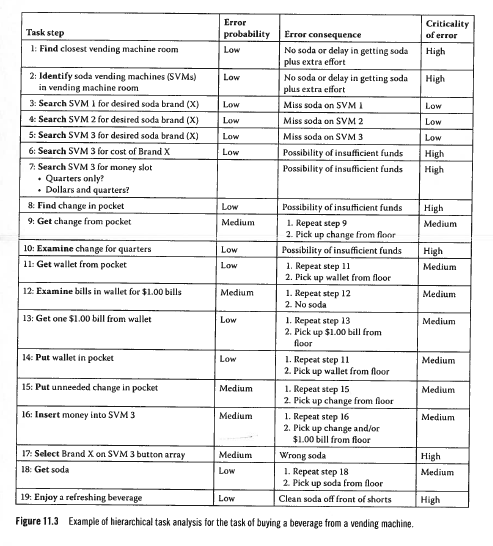

Hierarchical Task Analysis (HTA). HTA decomposes a goal into a series of subgoals and task components. Additional information is presented for each step, including potential errors, the probability that the error will occur at this step, the severity of the error, and/or possible design solutions to reduce the probability of the error occurring (Gillan, 2012). Below is an example HTA for using a vending machine (Gillan, 2012).
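For analysts who want to keep an HTA in a machine-readable form, the hierarchy maps naturally onto a tree. The following Python sketch is one possible representation, not a standard format: the `HTANode` class, the `outline` and `error_notes` helpers, and all of the vending machine content (steps, errors, probabilities, and design solutions) are hypothetical and are not taken from Gillan (2012).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class HTANode:
    label: str
    potential_error: str = ""
    error_probability: Optional[float] = None  # analyst's estimate, if any
    severity: str = ""
    design_solution: str = ""
    children: list["HTANode"] = field(default_factory=list)


def outline(node: HTANode, number: str = "0", depth: int = 0) -> list[str]:
    """Number the hierarchy (0, 1, 1.1, ...) and return one line per node."""
    lines = ["  " * depth + f"{number} {node.label}"]
    for i, child in enumerate(node.children, start=1):
        child_number = str(i) if number == "0" else f"{number}.{i}"
        lines.extend(outline(child, child_number, depth + 1))
    return lines


def error_notes(node: HTANode) -> list[HTANode]:
    """Collect every node that has a potential error recorded."""
    found = [node] if node.potential_error else []
    for child in node.children:
        found.extend(error_notes(child))
    return found


# Hypothetical content for illustration only.
hta = HTANode("Buy an item from a vending machine", children=[
    HTANode("Pay for the item", children=[
        HTANode("Read the price"),
        HTANode("Insert coins", potential_error="Too little money inserted",
                error_probability=0.10, severity="low",
                design_solution="Display the remaining amount owed"),
    ]),
    HTANode("Select the item", potential_error="Wrong code entered",
            error_probability=0.05, severity="medium",
            design_solution="Show the item name before dispensing"),
    HTANode("Collect the item"),
])

print("\n".join(outline(hta)))
for node in error_notes(hta):
    print(f"{node.label}: {node.potential_error} -> {node.design_solution}")
```

Keeping the error information on the same node as the step makes it straightforward to list every step that has a recorded error alongside its proposed design solution.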

Goals, Operators, Methods, and Selection Rules (GOMS) Modeling. GOMS modeling breaks the task into goals or subgoals (Goals). With the goals in mind, the analyst identifies the behaviors (physical or cognitive) that the worker uses to complete a subgoal (Operators). The analyst then looks for sequences of commonly occurring operators (Methods) and the rules a user employs to select among competing methods (Selection Rules). Sometimes the analyst also includes the time it takes to complete certain methods or operators, allowing designers to build the system so that the user's goals can be achieved in the minimum amount of time (Gillan, 2012).
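To make the four GOMS components concrete, the sketch below models a single goal ("select item" on a vending machine) in Python. Everything in it is an assumption made for illustration: the operator names, the operator times, the two methods, and the selection rule are hypothetical, and the total time is computed by simply summing operator times as if they were performed strictly one after another.

```python
# Operators: hypothetical times in milliseconds (integers keep the sums exact).
OPERATOR_MS = {
    "locate item in display window": 1500,
    "recall item code": 1200,
    "press keypad button": 300,
    "point to touchscreen tile": 1100,
    "tap touchscreen tile": 200,
}

# Methods: sequences of operators that accomplish the goal "select item".
METHODS = {
    "keypad": [
        "locate item in display window",
        "recall item code",
        "press keypad button",
        "press keypad button",
    ],
    "touchscreen": [
        "locate item in display window",
        "point to touchscreen tile",
        "tap touchscreen tile",
    ],
}


def method_time_ms(method: str) -> int:
    """Total time for a method, taken as the sum of its operator times."""
    return sum(OPERATOR_MS[op] for op in METHODS[method])


def select_method(has_touchscreen: bool) -> str:
    """Selection rule (assumed): use the touchscreen whenever one is available."""
    return "touchscreen" if has_touchscreen else "keypad"


for has_touchscreen in (False, True):
    chosen = select_method(has_touchscreen)
    print(chosen, method_time_ms(chosen), "ms")
# keypad 3300 ms
# touchscreen 2800 ms
```

Comparing the totals for competing methods is what lets an analyst argue that one design supports the goal in less time than another. A real analysis would replace the placeholder times with measured or published values.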

Module method. This task analysis method breaks the goal of the user into subgoals. Within each subgoal, the analyst identifies the behavior the user employs to achieve the subgoal, the information the user needs to complete the behavior, and the consequence of the behavior. This is a particularly helpful method for identifying how well the user receives feedback from the system after engaging in the behavior. The system should make the consequence of the behavior readily perceivable to the user (Gillan, 2012).
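A module can be recorded as one row per subgoal. The Python sketch below is a minimal illustration, assuming a hypothetical `Module` record and hypothetical vending machine entries. The `consequence_perceivable` field stands in for the analyst's judgment about feedback and is not part of the method as described by Gillan (2012).

```python
from dataclasses import dataclass


@dataclass
class Module:
    subgoal: str
    behavior: str
    information_needed: str
    consequence: str
    consequence_perceivable: bool  # analyst's judgment (assumed field)


# Hypothetical rows for illustration only.
modules = [
    Module("Pay for the item", "Insert coins", "Item price",
           "Credit total increases on the display", True),
    Module("Select the item", "Enter item code", "Code shown under the item",
           "Machine registers the selection", False),
    Module("Collect the item", "Reach into the tray", "Location of the tray",
           "Item is in hand", True),
]

# Subgoals where the user gets no readily perceivable feedback.
feedback_gaps = [m.subgoal for m in modules if not m.consequence_perceivable]
print(feedback_gaps)  # ['Select the item']
```

Filtering the rows this way gives the designer a short list of places where feedback may need to be added or made more salient.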

PRONET. PRONET analyzes how closely the behaviors used to complete a task are related by comparing how often one task follows another in sequence. It then creates a matrix of the relative probabilities that each task in the task lexicon will follow each other task. A computer program called Pathfinder is then used to analyze this matrix, creating a visual network that groups the most commonly co-occurring behaviors together. This method can be useful because designers should build their product so that the most common sequences of actions can be completed quickly and easily (Gillan, 2012). For instance, if the designer of an automobile finds that there is a high probability that users attempt to roll up power windows after removing the key from the ignition, designing in a period of time during which this function remains available removes the need to reinsert the key to achieve this goal.
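The first part of this procedure, building the matrix of transition probabilities from observed behavior sequences, can be sketched in Python as follows. The observed sequences are hypothetical, the function name `transition_probabilities` is made up for this example, and the sketch stops before the Pathfinder network analysis, which it does not attempt to reproduce.

```python
from collections import Counter, defaultdict


def transition_probabilities(sequences):
    """Estimate P(next task | current task) from observed task sequences."""
    counts = defaultdict(Counter)
    for sequence in sequences:
        # Pair each task with the task that immediately follows it.
        for current, following in zip(sequence, sequence[1:]):
            counts[current][following] += 1
    return {
        current: {nxt: n / sum(followers.values()) for nxt, n in followers.items()}
        for current, followers in counts.items()
    }


# Hypothetical observations of four drivers leaving a car.
observed = [
    ["turn off engine", "remove key", "roll up window", "exit car"],
    ["turn off engine", "remove key", "roll up window", "exit car"],
    ["roll up window", "turn off engine", "remove key", "exit car"],
    ["turn off engine", "remove key", "roll up window", "exit car"],
]

matrix = transition_probabilities(observed)
print(matrix["remove key"])
# {'roll up window': 0.75, 'exit car': 0.25}
```

In this made-up data set, rolling up the window follows key removal three times out of four, which is the kind of pattern that would support keeping the windows powered for a short time after the key is removed.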

Applied Cognitive Task Analysis (ACTA). ACTA elicits and represents the cognitive components of skilled task performance and provides the means to transform data into design recommendations. The process has four steps. First, a task diagram elicits a broad overview of the task by listing task steps and identifying the difficult cognitive elements at each step. Second, a knowledge audit identifies ways in which expertise is used in a domain and provides examples based on actual experience. Expertise is described by these skills: diagnosing and predicting, situation awareness, perceptual skills, developing and knowing when to apply tricks of the trade, improvising, metacognition, recognizing anomalies, and compensating for equipment limitations. Probing questions are used to reveal the nature of these skills, specific events where they were required, strategies that have been used, and so forth. Third, a simulation interview helps the experimenter better understand the cognitive processes involved within the context of an incident. Major events, including judgements and decisions, are recorded from the task description/scenario. Fourth, the researcher synthesizes information from the previous three steps to produce a cognitive demands table containing design recommendations. The cognitive demands table focuses the analysis on project goals, and its contents could include difficult cognitive elements, why they were difficult, common errors, and the cues and strategies used (Militello & Hutton, 1998).

References & Resources

References

- Duncan (1974). Analysis techniques in training design. In E. Edwards & F. P. Lees (Eds.), The human operator in process control. London: Taylor & Francis.

- Drury, C. G. (1983). Task analysis methods in industry. Applied Ergonomics, 14(1), 19-28.

- Gillan, D. J. (2012). Five questions concerning task analysis. In M. A. Wilson, W. R. Bennett, S. G. Gibson, & G. M. Alliger (Eds.), The handbook of work analysis: Methods, systems, applications and science of work measurement in organizations (pp. 201-213).

- Hoffman, R. R., Crandall, B., & Shadbolt, N. (1998). Use of the Critical Decision Method to elicit expert knowledge: A case study in the methodology of cognitive task analysis. Human Factors, 40(2), 254–276.

- Militello, L. G., & Hutton, R. J. (1998). Applied cognitive task analysis (ACTA): A practitioner’s toolkit for understanding cognitive task demands. Ergonomics, 41(11), 1618–1641. doi:10.1080/001401398186108

- John, B. E., & Kieras, D. E. (1996). The GOMS family of user interface analysis techniques: Comparison and contrast. ACM Transactions on Computer-Human Interaction (TOCHI), 3(4), 320-351.